Over the last year, AI has transformed recruiting faster than even the most vigilant leaders expected.

While AI has delivered real efficiencies, including faster screening, broader reach and better matching, it has also fueled large-scale candidate fraud amid a flood of mass-generated resumes. AI in hiring has quickly become a risk management challenge with serious legal, reputational and operational implications.

As organizations adopt AI tools to manage the unprecedented volume of applicants, they are discovering a sobering reality: Automation has lowered barriers for those intent on sophisticated fraud.

A recent survey of senior talent acquisition executives conducted by the Institute for Corporate Productivity (i4cp) found:

For senior HR executives, the question is no longer whether to use AI in hiring, but how to use it responsibly amid escalating candidate fraud and a rapidly shifting regulatory landscape. For global companies, the picture is more complicated still: AI hiring risk looks fundamentally different outside the United States, particularly in the European Union.

Under the EU Artificial Intelligence Act, AI systems used for recruitment, candidate screening, interviewing, performance evaluation, or termination decisions are explicitly classified as high‑risk systems. This designation triggers mandatory requirements that go far beyond current U.S. norms, including formal risk management programs, documented impact assessments, bias testing, human oversight, audit‑ready technical documentation and ongoing monitoring.

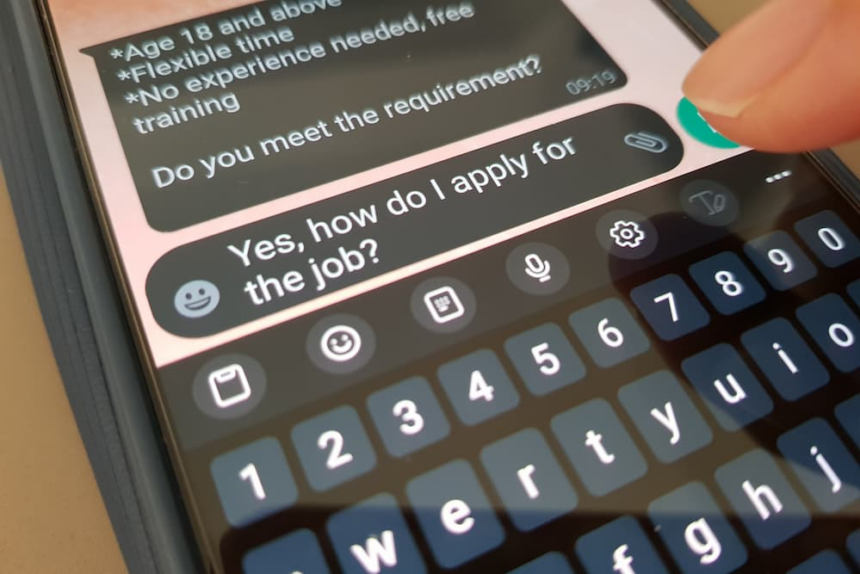

Employers are drowning in resumes, many of them AI‑generated. Recruiters report being overwhelmed, while qualified applicants struggle to stand out. In response, companies have leaned even more heavily on AI‑enabled screening and assessment tools, often without fully accounting for the risks that accompany them.

More troubling still, the i4cp survey found that many employers acknowledge they have not yet established structure or governance around the use of AI in hiring. A majority of respondents (56%) said their organizations are in the “awareness/ad hoc” phase of AI risk management maturity.

For HR leaders, this convergence of applicant volume, sophisticated deception and lack of governance creates real exposure: not only the risk of hiring the wrong person, but also legal liability and cybersecurity threats.

Fraudulent candidates introduce risks that extend far beyond poor performance. Negligent hiring claims remain one of the most significant legal exposures for employers, particularly when background checks are inadequate or inconsistent. Courts have repeatedly held employers liable when they “knew or should have known” that an employee posed a risk at the time of hire. Now that hiring fraud risks are well documented, that standard is becoming increasingly easy for plaintiffs to meet.

The implications escalate further when fraudulent hires gain access to sensitive systems or data. Law enforcement has warned that fake IT workers, sometimes tied to foreign criminal or state‑sponsored actors, have infiltrated U.S. companies to steal intellectual property, exfiltrate customer data or deploy ransomware. In regulated industries, employers may also face liability to customers, patients or other third parties whose data is compromised.

In short, AI‑enabled fraud collapses the traditional boundaries between HR, Legal, IT, Privacy and Security. Senior HR leaders can no longer treat hiring controls as an operational detail; they are part of the organization’s risk architecture.

Complicating matters is a fragmented regulatory environment. At the federal level, enforcement related to disparate impact claims has narrowed, and prior guidance on AI in employment has been rolled back. Recent court decisions emphasize higher evidentiary thresholds for proving discrimination based on statistical disparities alone.

But for employers, this is not a green light—it is a trap.

States are rapidly filling the void with aggressive new laws governing AI in hiring and employment decisions. New York City requires annual bias audits and public disclosures for automated employment decision tools. California has finalized sweeping regulations governing automated decision systems, including strict data retention requirements and prohibitions on discriminatory outcomes. Colorado has enacted a law requiring employers to exercise “reasonable care” to prevent algorithmic discrimination, backed by significant civil penalties.

For multi‑state employers, compliance is not a matter of meeting a single federal standard. AI governance must be designed to satisfy the applicable laws of every state in which the organization operates. In practice, avoiding liability often means meeting the requirements of the most restrictive jurisdiction, frequently before regulators or courts have established how these new laws will be interpreted and applied.

As states pass new, untested laws, the potential liability is massive. Laws that impose fixed penalties for even minor violations are a recipe for class action litigation. Recent job posting disclosure laws have already led to hundreds of lawsuits, often resulting in six- or seven-figure payments to plaintiffs.

One of the clearest messages for senior HR leaders is this: avoid black‑box hiring tools. If your organization cannot explain how an AI system evaluates candidates—what data it uses, how it was trained, and how decisions are made—you will struggle to defend it to regulators, courts, or your own board of directors.

Executives should expect AI vendors to provide documentation on training data, bias testing, auditability and regulatory compliance. Contracts with AI tool vendors must include audit rights, clear data‑use limitations and meaningful indemnification provisions. A vendor’s reluctance to provide transparency is itself a risk signal.

Importantly, HR leaders must also ensure that applicant data is protected. Privacy liabilities are expanding, particularly in California, where applicant and employee data now receive the full protections of the California Consumer Privacy Act (CCPA). Mishandling resumes, video interviews, IP addresses or inferred data can trigger fines, litigation and reputational damage.

Despite the sophistication of these risks, many of the most effective defenses remain grounded in fundamentals. Camera‑on interviews, in‑person meetings where feasible, verified references, consistent background checks and zero‑tolerance policies for intentional misrepresentation matter more than ever.

AI detection tools should be used cautiously. Poorly trained detection systems can introduce bias or trigger legal obligations of their own. Simple procedural safeguards can be surprisingly effective without introducing new regulatory risk. One example is asking candidates to perform basic movements during video interviews, such as turning their head or passing a hand in front of their face, which real-time face-swap tools often render poorly.

Most importantly, HR leaders must act quickly when fraud is suspected: suspend access, preserve data, involve IT and Legal immediately, and investigate thoroughly. Delay increases exposure.

For senior HR executives, the path forward requires balance: leveraging AI’s legitimate benefits while building governance equal to its risks. This is not about slowing innovation—it is about ensuring that innovation does not outpace accountability and legal compliance.

© 2026 HR Executive. All rights reserved.

The hidden risks of AI-driven hiring: How can HR leaders stay ahead? – HR Executive
